Instruct-NeRF2NeRF is a technique developed by researchers at UC Berkeley for editing 3D NeRF (Neural Radiance Field) scenes with written instructions. It aims to make 3D editing accessible to users who are not experts in 3D modeling.

Neural reconstruction techniques have made capturing a 3D scene relatively easy. Editing one is still hard: manually sculpting, extruding, and retexturing 3D models takes specialized tools and years of practice, and editing tools designed for contemporary 3D representations are still in their infancy.

Instruct-NeRF2NeRF addresses this gap by editing a NeRF scene in a 3D-consistent manner from a written instruction. Because it works on the scene that has already been captured, changes do not require manually recreating the entire model.

As 3D content becomes more prevalent, techniques like Instruct-NeRF2NeRF will become increasingly important in enabling non-experts to modify and create 3D assets. This will ultimately help to democratize the creation of 3D content and make it more accessible to a wider audience.

Prompts in Instruct-NeRF2NeRF

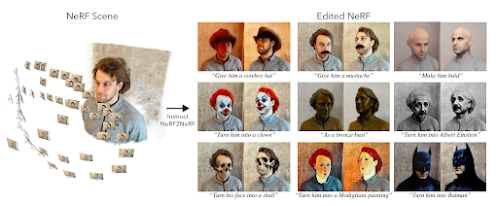

Instruct-NeRF2NeRF enables a range of changes to a 3D scene using flexible and expressive language instructions, such as "Give him a cowboy hat" or "Make him become Albert Einstein." These instructions can be given for a 3D scene capture of a person, for example, as shown in Figure 1 (left).

To enable this, the method extracts shape and appearance priors from a 2D diffusion model rather than a 3D generative model, because the data available for training 3D generative models is still limited. Specifically, the researchers use InstructPix2Pix, a recently developed image-conditioned diffusion model that performs instruction-based 2D image editing.

Building on these components, Instruct-NeRF2NeRF makes 3D scene modification approachable for regular users, including those who are not experts in 3D modeling.

Iterative Dataset Update (Iterative DU)

However, applying InstructPix2Pix independently to individual images rendered from a reconstructed NeRF produces edits that are inconsistent across viewpoints. To address this, the researchers developed a straightforward technique that is similar in spirit to recent 3D generation methods such as DreamFusion. They call it Iterative Dataset Update (Iterative DU): it alternates between editing the "dataset" of NeRF input images and updating the underlying 3D representation to incorporate the edited images.
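The alternation described above can be illustrated with a deliberately tiny sketch. It is not the authors' implementation: the "scene" is a single scalar standing in for a NeRF, `toy_edit` is a hypothetical stand-in for InstructPix2Pix that pulls an image toward a target with a per-view bias, and every name and parameter (`view_bias`, `strength`, `lr`, `fit_steps`) is an assumption made for illustration. The real method also conditions each edit on the original capture and the text instruction, which this toy omits.

```python
def toy_edit(rendered, bias, target, strength):
    """Stand-in for a 2D instruction-based editor (hypothetical, not InstructPix2Pix).

    Pulls the rendered value toward `target`, plus a per-view `bias` that
    mimics edits disagreeing across viewpoints.
    """
    return rendered + strength * (target + bias - rendered)


def iterative_dataset_update(dataset, view_bias, target,
                             rounds=200, strength=0.5, lr=0.1, fit_steps=5):
    """Alternate between editing one dataset image and refitting the scene."""
    dataset = list(dataset)
    # Toy "NeRF": one scalar shared by all views, initialized from the captures.
    scene = sum(dataset) / len(dataset)
    for i in range(rounds):
        view = i % len(dataset)
        rendered = scene  # toy renderer: every view sees the same value
        # Dataset update: replace this view's image with an edited render.
        dataset[view] = toy_edit(rendered, view_bias[view], target, strength)
        # Scene update: a few gradient steps on the mean squared error
        # between the scene and all current dataset images.
        for _ in range(fit_steps):
            grad = sum(2.0 * (scene - img) for img in dataset) / len(dataset)
            scene -= lr * grad
    return scene, dataset


if __name__ == "__main__":
    biases = [0.3, -0.1, -0.3, 0.1]
    scene, edited = iterative_dataset_update([0.0] * 4, biases, target=1.0)
    print("scene:", scene)
    print("edited images:", edited)
```

In this toy setting the individual edited images still disagree with one another, while the shared scene value settles on a single consolidated edit. That is the intuition behind routing every 2D edit through the 3D representation.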

The researchers evaluated their technique on a range of NeRF scenes and validated their design decisions by comparing against ablated versions of the method and against naive implementations of the score distillation sampling (SDS) loss proposed in DreamFusion. They also compared it qualitatively with a concurrent text-based stylization approach, showing that the method can apply a wide variety of edits to people, objects, and large-scale scenes.
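For reference, the SDS gradient that those baselines build on is usually written as follows. The notation is DreamFusion's, not this article's: θ denotes the parameters of the 3D representation, x = g(θ) a rendered image, z_t its noised version at timestep t, y the text prompt, ε the sampled noise, ε̂_φ the diffusion model's noise prediction, and w(t) a timestep-dependent weight.

```latex
\nabla_{\theta} \mathcal{L}_{\mathrm{SDS}}\bigl(\phi,\, x = g(\theta)\bigr)
  = \mathbb{E}_{t,\epsilon}\!\left[
      w(t)\,\bigl(\hat{\epsilon}_{\phi}(z_t;\, y,\, t) - \epsilon\bigr)\,
      \frac{\partial x}{\partial \theta}
    \right]
```

Iterative DU instead uses the diffusion model's edited images directly as training targets for the NeRF.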

By resolving the inconsistency of edits across viewpoints, Iterative DU makes Instruct-NeRF2NeRF more effective at modifying 3D NeRF scenes, which helps non-experts create and modify 3D content with greater ease and accuracy.
