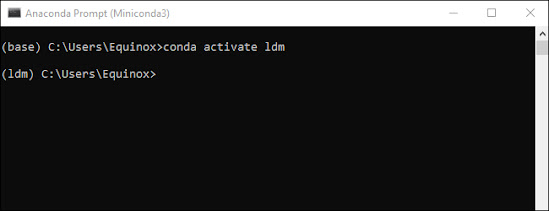

In a previous article, we showed how to prepare your computer to run Stable Diffusion by installing some dependencies, creating a virtual environment, and downloading Stable Diffusion from GitHub. In this article, we will show you how to run Stable Diffusion and create images.

First, you must activate the ldm environment we built previously each time you wish to use Stable Diffusion. In the Miniconda3 window, type conda activate ldm and press "Enter." The (ldm) on the left-hand side of the prompt indicates that the ldm environment is active.

Note: You only need to run this command once each time you open Miniconda3. As long as you don't close the window, the ldm environment stays active.
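If you would rather confirm the active environment from inside Python than rely on the (ldm) prefix, the following is a minimal sketch. It assumes the CONDA_DEFAULT_ENV variable that conda sets on activation; the helper names active_conda_env and is_ldm_active are made up for this illustration.

```python
import os


def active_conda_env(environ=None):
    """Return the name of the active conda environment, or None if none is set.

    Assumption: conda exposes the active environment through the
    CONDA_DEFAULT_ENV environment variable.
    """
    environ = os.environ if environ is None else environ
    return environ.get("CONDA_DEFAULT_ENV")


def is_ldm_active(environ=None):
    """Return True when the active conda environment is named 'ldm'."""
    return active_conda_env(environ) == "ldm"


if __name__ == "__main__":
    if is_ldm_active():
        print("The ldm environment is active.")
    else:
        print("ldm is not active; run: conda activate ldm")
```

Run it in the same Miniconda3 window you plan to generate images from, since each window has its own environment.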

Before we can generate any images, we must first change the directory to "C:\stable-diffusion\stable-diffusion-main":

cd C:\stable-diffusion\stable-diffusion-main

How to Create Images with Stable Diffusion

We're going to use a script called txt2img.py to turn text prompts into 512x512 images. Here is an example; try it out to check that everything is working properly:

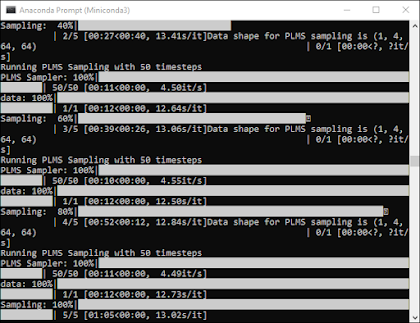

python scripts/txt2img.py --prompt "a close-up portrait of a cat by pablo picasso, vivid, abstract art, colorful, vibrant" --plms --n_iter 5 --n_samples 1

Your Conda console should look something like this:

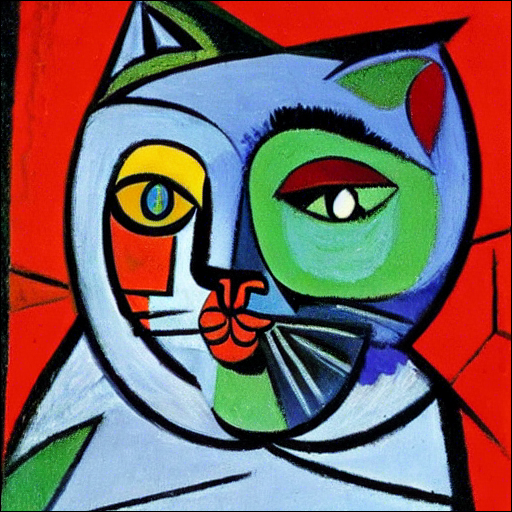

The output of that command is five cat images, all of which can be found at "C:\stable-diffusion\stable-diffusion-main\outputs\txt2img-samples\samples".
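To find the most recent results without browsing the folder by hand, here is a minimal sketch that lists the newest files in the samples folder. The SAMPLES_DIR value is the output path quoted above, the .png pattern is an assumption about the file type, and newest_images is a hypothetical helper name; adjust all three to your setup.

```python
from pathlib import Path

# Assumed output location, based on the path quoted in this article.
SAMPLES_DIR = Path(
    r"C:\stable-diffusion\stable-diffusion-main\outputs\txt2img-samples\samples"
)


def newest_images(folder, count=5, pattern="*.png"):
    """Return up to `count` files matching `pattern`, most recently modified first.

    Returns an empty list when the folder does not exist.
    """
    folder = Path(folder)
    if not folder.is_dir():
        return []
    files = sorted(
        folder.glob(pattern),
        key=lambda path: path.stat().st_mtime,
        reverse=True,
    )
    return files[:count]


if __name__ == "__main__":
    for image in newest_images(SAMPLES_DIR):
        print(image)
```

The default count of 5 matches the --n_iter 5 value used in the example command, so one call shows the images from the latest run.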

Although the result isn't perfect, it clearly has Pablo Picasso's aesthetic, as we specified in the prompt. Your images should look similar to these, but they will not necessarily be identical.

To change the generated image, simply alter the text inside the double quotation marks that follow --prompt:

python scripts/txt2img.py --prompt "YOUR, DESCRIPTIONS, GO, HERE" --plms --n_iter 5 --n_samples 1

Wrapping Up

In this article, we showed how to run Stable Diffusion on your own computer, in your own environment. Although it involves many steps, the process is simple and straightforward.

Finally, getting fantastic results requires a lot of trial and error, but that is at least half the fun. Make sure to save the arguments and prompts that produce the results you like. If you don't want to do all of the experimenting yourself, there are great communities on Reddit (and elsewhere) devoted to sharing images and the prompts that produced them.
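One way to keep track of what worked is to append each prompt and its arguments to a small log file. The sketch below is only an illustration: build_command and log_prompt are hypothetical helpers, the JSON-lines log format is our own choice, and the flags are the ones used in the commands earlier in this article.

```python
import json
from datetime import datetime, timezone


def build_command(prompt, n_iter=5, n_samples=1):
    """Return the txt2img.py invocation used in this article as an argument list."""
    return [
        "python", "scripts/txt2img.py",
        "--prompt", prompt,
        "--plms",
        "--n_iter", str(n_iter),
        "--n_samples", str(n_samples),
    ]


def log_prompt(log_file, prompt, n_iter=5, n_samples=1, note=""):
    """Append one JSON line describing a run so it can be repeated later."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "command": build_command(prompt, n_iter, n_samples),
        "note": note,
    }
    with open(log_file, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(entry) + "\n")
    return entry


if __name__ == "__main__":
    # "prompt_log.jsonl" is a hypothetical file name; any writable path works.
    saved = log_prompt(
        "prompt_log.jsonl",
        "a close-up portrait of a cat by pablo picasso, vivid, abstract art",
        note="good colors",
    )
    print(" ".join(saved["command"]))
```

Storing the command as a list keeps the prompt intact even when it contains commas or spaces; add the quotation marks back around the prompt when you paste it into the console.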
